Five Things You Need to Know Before Writing Multiple Choice Questions for Your Next Elearning Course

Do you write multiple-choice assessment questions?

If you're reading this, you likely do or you will soon. And I'm glad you found this article because according to a recent Instructional Designers in Offices Drinking Coffee (IDIODC) podcast guest, writing bad test questions can do more harm than good.

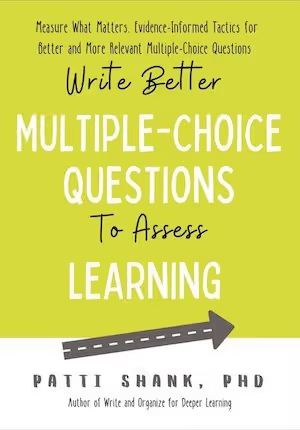

Dr. Patti Shank, author of Write Better Multiple-Choice Questions to Assess Learning, was our guest that episode. She opened our eyes to the importance of writing effective test questions in general and multiple-choice questions specifically.

I've pulled out five important tips from the book to highlight here, but rest assured, these only touch on the book’s full list of important tips you need to truly level up your multiple-choice question writing game.

Multiple-Choice Tip 1: Connect with Learning Objectives

For any academically-trained instructional designer this feels like a DUH! moment. Of course, our assessments should be tied to learning objectives. And so, you might be tempted to skip this section of the book. I urge you not to.

Patti makes a great case not only for having learning objectives but also for pushing for professionally written ones, and even for spending time rewriting weak objectives you may have been given. This can be tricky if you are dealing with sensitive stakeholders, subject matter experts (SMEs), or clients. However, you do not need to tell them you are rewriting the objectives. You can use it as an opportunity to ask follow-up questions and rewrite the objectives for yourself, which makes writing your multiple-choice questions easier and better.

For those who may not be academically trained in instructional design, the book guides you through a few exercises to help you learn how to write learning objectives. Patti introduces you to the ABCD method of writing learning objectives and offers a few examples for you to practice. Do not skip this part!

Patti reminds us that your multiple-choice questions are only as good as your Learning Objectives. Therefore, refreshing your memory, or learning about them for the first time, is important as you seek to improve the effectiveness of your assessments.

dominKnow | ONE User Bonus

dominKnow | ONE users who take advantage of xAPI can also take advantage of Learning Objective mapping within the platform.

dominKnow | ONE’s Learning Objective mapping allows learners to be marked complete for course content that they have already encountered outside of a course, such as in a knowledge base. Learn more about Learning Objective Mapping in dominKnow | ONE!

Multiple-Choice Tip 2: Understand the Structure of a Good Multiple-Choice Question

This is the question, and these are the answers. It is that easy, right?

Well, sort of.

There is a little more to it because instructional designers need to be able to communicate with course developers (if your team is lucky enough to have separate roles!). And if you are the eLearning developer, it is important that you understand the structural elements of multiple-choice questions, too.

As a developer, you may be given multiple-choice questions in a Word doc, an Excel spreadsheet, or even a PowerPoint file. It doesn't matter which digital format is used, as long as there is a consistent structure that you understand and can use to convert the questions into your eLearning authoring tool.

Structure

Here’s the basic structure for a multiple-choice question, whether it’s in an elearning course or in some other format:

Stem:

- Answer 1

- Answer 2*

- Answer 3

There are correct answers and incorrect answers. If you’re an instructional designer writing multiple-choice questions, then you need to communicate which is which to your elearning developers. The correct answer (marked with an asterisk in the structure above) is called the key, and incorrect answers are called distractors.
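If you hand questions over to a developer, it can help to think of that structure as a small record. Here is a minimal Python sketch of the idea. It is not taken from Patti's book or from any particular authoring tool; the `MultipleChoiceQuestion` class and its field names are hypothetical, chosen only to mirror the stem, key, and distractor terms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MultipleChoiceQuestion:
    """Hypothetical record for one question: a stem, the key, and the distractors."""

    stem: str                     # the question text
    key: str                      # the correct answer
    distractors: tuple[str, ...]  # the incorrect answers

    def options(self) -> list[str]:
        """Return every answer option, key first (no shuffling here)."""
        return [self.key, *self.distractors]

    def is_correct(self, selected: str) -> bool:
        """Return True only when the selected option is the key."""
        return selected == self.key


# Example question built from the terms used in this section.
question = MultipleChoiceQuestion(
    stem="What is the correct answer to a multiple-choice question called?",
    key="The key",
    distractors=("The stem", "A distractor"),
)
```

Whatever file format the questions arrive in, a record like this makes it unambiguous which option is the key, so nobody has to guess from formatting.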

Patti’s book walks through the research, examples, and practice of writing good keys and distractors. She answers questions like:

- How many answer options are best?

- Is “none of the above” or “all of the above” a useful option?

Spoiler alert! “All of the above” and “none of the above” are bad options.

The Stem

Writing a good Stem is not as easy as it looks!

Research shows several different mistakes that cause confusion for the test taker. For an instructor, or subject matter expert, writing a few quick test questions can seem simple and obvious. But after you get through three chapters of the book, you'll begin to realize how easy it is to write Stems that cause more confusion than they should. Fortunately, the book also helps you correct those errors.

dominKnow | ONE provides significant flexibility to accommodate all design possibilities based on your objectives. The dominKnow community is filled with articles and “How To's” on authoring your course assessments with the specific settings you need.

A Common Structural Mistake

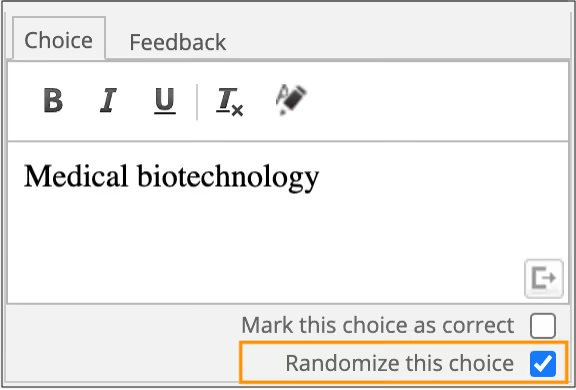

Patti points out that, “Multiple choice question writers may unintentionally put correct answers in the same answer choice position. Be sure to intentionally move the position of the correct answer so the correct answer position isn’t easy to guess.”
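If your questions live in a spreadsheet or script, one rough way to spot this habit is to count where the key lands across a quiz. The sketch below is only an illustration: the `key_position_counts` helper and the sample positions are made up, and what counts as "too often" is a judgment call I'm not taking from the book.

```python
from collections import Counter


def key_position_counts(key_positions):
    """Count how often the key sits in each answer position (0-based)."""
    return Counter(key_positions)


# Hypothetical 8-question quiz: the position of the key in each question.
positions = [1, 1, 2, 1, 1, 0, 1, 1]

counts = key_position_counts(positions)
most_common_position, hits = counts.most_common(1)[0]
share = hits / len(positions)

# A share far above an even split (about 1/3 for three options) hints
# that the key position is easy to guess.
print(f"Position {most_common_position} holds the key in {share:.0%} of questions")
```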

This is not a concern when using dominKnow | ONE because you can set your answers to appear in random answer positions each time the question page is loaded.
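To be clear, I don't know how dominKnow | ONE implements this internally. But the general idea of randomizing answer positions on each load can be sketched in a few lines of Python, assuming every option's text is unique. The `shuffled_options` function is hypothetical and exists only for illustration.

```python
import random


def shuffled_options(key, distractors, rng=None):
    """Return (options, key_index) with the options in random order.

    Assumes every option text is unique, so the key can be found by value.
    """
    if rng is None:
        rng = random.Random()
    options = [key, *distractors]
    rng.shuffle(options)
    return options, options.index(key)


# Each call stands in for one load of the question page.
options, key_index = shuffled_options("The key", ["The stem", "A distractor"])
```

Because the order changes on every load, the key's position stops being something a test taker can learn and guess.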

For more information about editing multiple choice question pages in dominKnow | ONE read this article.

Multiple-Choice Tip 3: There Are Different Multiple-Choice Question Formats

I'll admit I was pleasantly surprised at how many distinct types of multiple-choice questions there are.

There is the standard multiple-choice question and the multiple-choice question with multiple correct options. And do not forget that True or False is also basically a multiple-choice question.

But here is where it gets interesting. There are also multiple true-false, matching, and item sets. Reading the examples Patti shares gave me so many more options than I had previously considered in my test writing.

Unsure what multiple true-false is? Check out this example created in dominKnow | ONE’s Flow authoring option.

Matching is quite a common question type. In my experience, matching questions are often portrayed as quite different from multiple-choice questions. But they really are just a different format of multiple choice. Patti also points out that “matching is good to assess recall and understanding but typically not good to assess higher-level thinking.”

And item sets are like multiple true-false questions but, as you might have guessed, each additional stem, based on a situation, has standard multiple-choice options.

There is also the Complex question format, but I’m going to leave that one for you to discover in the book.

Multiple-Choice Tip 4: Use the Best-Aligned Types and Frequency of Feedback

There are several different methods for providing feedback.

You may choose to provide no feedback at all. Or you may deliver feedback as soon as a selection is made, or wait until the user submits the answer they have chosen. This, of course, assumes a computer-based instructional solution. If a live instructor is involved then different feedback options will apply.

The research is mixed regarding when and how to give feedback. Patti has done the arduous work of reviewing the research and offering some tips and best practices for you to follow. The good news is that the research is clearer when specifically referring to computer-based instruction or self-paced eLearning courses.

The best feedback approach within computer-based instruction depends on several factors:

- Level of expertise

- Task level

- Level of prior knowledge

Patti provides a simple chart in the book.

Multiple-Choice Tip 5: Write Effective Instructional Text

Do you really need to tell users how to answer a multiple-choice question?

Absolutely.

It can be as simple as “Select the correct answer.” Or “Select the best answer.” However, be careful when choosing “best” over “correct”. You'll need to be certain you are “providing enough detail for the test taker to distinguish between best and less good,” Patti notes.

But what if you allow multiple answers to be selected?

This is the multiple-correct-answer type mentioned in Tip 3 above. Research originally pointed in the direction of avoiding this type of question. However, Patti found there are some cases with real-world tasks where multiple items need to be recalled or selected. Her examples in the book help clarify the point.

Patti also points to research regarding other aspects of assessment instructions. Giving details about how the test is being scored is recommended and leaving out that information is problematic for the validity of your test.

Other elements you should include in your instructions are:

- Length of test

- Purpose of test

- Time it will take on average to complete

- Feedback

- Number of attempts, and more.

The basic idea is to give enough instruction in your introduction to the test that taking the test does not become a barrier to answering the test questions thoughtfully.

Do Your Multiple-Choice Questions Pass the Test?

Elearning authoring tools help us build assessments quickly and easily.

This is both a blessing and a curse. Tools can often make it too easy to write bad multiple-choice questions.

However, just as bad presentations should not be blamed on tools like PowerPoint, confusing and ineffective test questions should never be blamed on the authoring tools. It’s our responsibility as instructional designers to also be good writers of multiple-choice questions for our elearning courses.

And Patti’s book, Write Better Multiple-Choice Questions to Assess Learning, is a must-read for all of us.

Want to see how dominKnow | ONE can help you create better multiple-choice questions for your elearning courses? Sign up for a free trial today.
